This content originally appeared on Bits and Pieces - Medium and was authored by Yonatan Sason

We build production platforms with AI every day, and we work with teams doing the same with their own stack: Cursor, Claude Code, Copilot. The difference shows up fast. By day two, some codebases are already harder to change than they were yesterday. Others keep getting easier. The difference is never the model. It’s what the code lands in.

The teams we work with that hit a wall? It’s always the same story. Someone generated a module (maybe 200 lines, maybe 600; it doesn’t matter). It worked. Passed tests. Shipped. A few days later someone needs to change it and realizes the only thing that understood this code was the context window that wrote it. Multiply that across a codebase over a few months and you’re looking at a full rewrite.

That module is now a black box. The code isn’t bad. There’s just no structure around it that lets a human or a different AI session touch it safely.

What we mean by “black box”

Not code you can’t read. Code where reading it doesn’t give you enough to change it.

We see the same failure modes over and over:

- No boundaries. A notification system that handles email, SMS, push, and webhooks in one module. Everything touches everything. You want to swap the email provider? Good luck — it shares state with SMS logic, and that relationship isn’t declared anywhere.

- Implicit dependencies. The module imports a user service, a template engine, a queue. How do they connect? The only documentation is the runtime behavior. So to understand it, you either run it or read all of it.

- Missing contracts. What does `sendNotification()` accept? What does it return? What happens on failure? AI-generated code often has clean implementations with no explicit interface. The “contract” is just whatever the current code happens to do.

- Docs that explain nothing. Generated JSDoc: `@param message the message to send`. Yeah, I can see that. What I need to know is why this function exists, what calls it, and what breaks if I change it.

Every AI coding tool we’ve worked with produces at least two of these by default. Most produce all four.
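To make the "missing contracts" failure mode concrete, here is a minimal sketch of what an explicit contract for `sendNotification()` could look like. Only the function name comes from the text above. The `Channel`, `NotificationRequest`, `SendResult`, and `Provider` types, the failure reasons, and the synchronous signature are all assumptions made for illustration.

```typescript
// Hypothetical contract for sendNotification(). Only the function name
// appears in the article; every type and field below is an assumption.

type Channel = "email" | "sms" | "push" | "webhook";

interface NotificationRequest {
  channel: Channel;
  recipient: string; // address, phone number, device token, or URL
  message: string;
}

// Failure is part of the contract: callers see every outcome in the type.
type SendResult =
  | { ok: true; channel: Channel; deliveredTo: string }
  | {
      ok: false;
      reason: "empty-message" | "missing-recipient" | "provider-error";
      detail: string;
    };

// Providers are passed in explicitly instead of living in shared module state.
type Provider = (recipient: string, message: string) => void;

/**
 * Why this exists: one entry point, so callers never talk to a provider
 * directly and a provider can be swapped per channel.
 * How it fails: it never throws; every failure is a SendResult with a reason.
 */
function sendNotification(
  request: NotificationRequest,
  providers: Record<Channel, Provider>,
): SendResult {
  if (request.message.trim() === "") {
    return { ok: false, reason: "empty-message", detail: "message must not be blank" };
  }
  if (request.recipient.trim() === "") {
    return { ok: false, reason: "missing-recipient", detail: "recipient must not be blank" };
  }
  try {
    providers[request.channel](request.recipient, request.message);
    return { ok: true, channel: request.channel, deliveredTo: request.recipient };
  } catch (error) {
    // Provider exceptions are converted into a declared failure case.
    const detail = error instanceof Error ? error.message : String(error);
    return { ok: false, reason: "provider-error", detail };
  }
}
```

With a signature like this, "what does it accept, what does it return, what happens on failure" can be answered from the types alone, and the doc comment carries the why rather than restating parameter names.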

Generation is solved. Day 2 isn’t.

The raw ability to produce working code from a description? We’re past that. What’s not solved is what happens when you need to change, extend, or hand off what was generated.

The metric that matters isn’t time to generate. It’s time to understand.

If AI saves you 10 hours writing a notification system but the next developer spends 40 hours understanding it before they can safely add a webhook provider, you didn’t save anything. You shifted the cost from the person who wrote it to the person who has to live with it. And that second person is usually you, a week later, having forgotten everything.

One black box module is annoying. A whole codebase of them after a few months of AI-first development… that’s a rewrite.

What actually makes code survive

We’ve spent years on this, not academically, but as the core challenge behind Bit. What makes code maintainable? Not readable. Maintainable. Changeable by someone who didn’t write it.

It comes down to structure:

- Explicit boundaries. Every component declares what it is, what it exposes, where it ends. Not by convention — by enforced structure.

- Declared dependencies. If A uses B, that relationship is visible, versioned, trackable. Not buried across 12 import statements.

- Typed contracts. You know what goes in, what comes out, how it fails. Without reading the implementation.

- Documentation that answers “why.” Not what the function does — why it exists, what problem it solves, what depends on it. The stuff that makes a new developer productive in minutes instead of days.

Code without these gets more expensive to touch every single day. It starts on day two and it compounds.
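As a rough illustration of explicit boundaries and declared dependencies, the sketch below puts an email channel behind one typed surface and lists everything it needs in a single `EmailDeps` object. All names here (`ChannelSender`, `DeliveryReceipt`, `EmailDeps`, `createEmailSender`, and the two fake providers) are hypothetical and are not taken from Bit or any real SDK.

```typescript
// Hypothetical sketch: every name below is illustrative.

interface DeliveryReceipt {
  channel: "email" | "sms";
  to: string;
  providerId: string;
}

// The typed contract a channel exposes. Nothing else is public.
interface ChannelSender {
  readonly channel: "email" | "sms";
  send(to: string, body: string): DeliveryReceipt;
}

// Declared dependencies: everything the email component needs, in one place.
interface EmailDeps {
  // Hands the rendered message to a provider and returns the provider's id.
  transport: (to: string, html: string) => string;
  renderTemplate: (body: string) => string;
}

/**
 * Why this exists: the email provider is the part most likely to change,
 * so it sits behind `transport` and nothing else knows about it.
 * What depends on it: any caller holding a ChannelSender for "email".
 */
function createEmailSender(deps: EmailDeps): ChannelSender {
  return {
    channel: "email",
    send(to, body) {
      const html = deps.renderTemplate(body);
      const providerId = deps.transport(to, html);
      return { channel: "email", to, providerId };
    },
  };
}

// Swapping the email provider changes one argument and touches no SMS code.
const viaProviderA = createEmailSender({
  transport: (to, html) => `a-${to}-${html.length}`,
  renderTemplate: (body) => `<p>${body}</p>`,
});
const viaProviderB = createEmailSender({
  transport: (to) => `b-${to}`,
  renderTemplate: (body) => `<p>${body}</p>`,
});
```

The design choice is that the dependency list is a type, not a set of imports scattered through the file, so a reader (or a different AI session) can see what the component relies on without running it.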

What this looks like in practice

When Hope AI generates a platform, it doesn’t generate into a void. It generates into Bit.

So every component gets structural feedback immediately. Does this compile independently (not the whole app, just this piece)? Are the dependencies declared? Do the tests pass in isolation? Is the public API typed? Does the documentation explain the why?

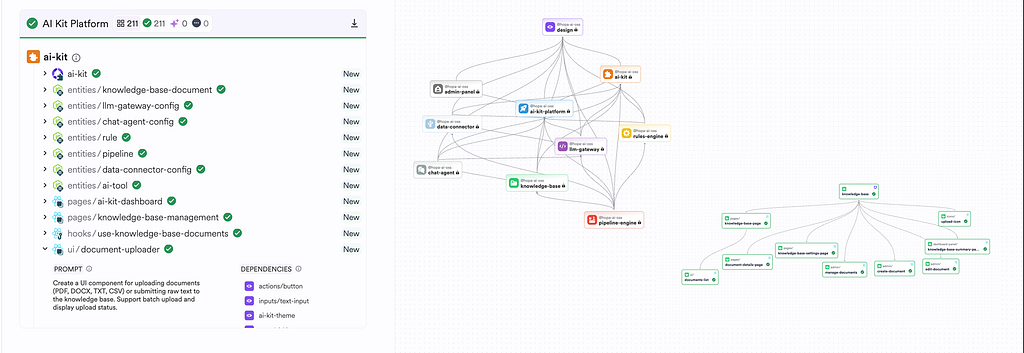

When we generated AI-Kit (211 components, 9 modules), every component came out with boundaries, tests, docs, and typed interfaces. The prompt didn’t say “add documentation.” The platform required it. The structure isn’t in the prompt. It’s in the environment.

And that’s the real point. The black box problem isn’t an AI problem. It’s an environment problem. AI generates what the environment accepts. If your environment accepts a module with no boundaries and no contracts, that’s what you get. If it requires explicit structure, you get that instead.
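One way to picture "AI generates what the environment accepts" is as an acceptance gate that every generated component has to pass. The sketch below is purely illustrative: it is not how Bit or Hope AI work internally, and `ComponentReport` and `reviewComponent` are hypothetical names whose fields simply mirror the structural questions listed earlier.

```typescript
// Hypothetical acceptance gate. This is not Bit's or Hope AI's API.

interface ComponentReport {
  name: string;
  compilesInIsolation: boolean;
  undeclaredDependencies: string[]; // imports used but never declared
  testsPassInIsolation: boolean;
  publicApiTyped: boolean;
  docsExplainWhy: boolean;
}

// Returns the reasons a component is rejected. An empty list means accepted.
function reviewComponent(report: ComponentReport): string[] {
  const problems: string[] = [];
  if (!report.compilesInIsolation) {
    problems.push("does not compile on its own");
  }
  if (report.undeclaredDependencies.length > 0) {
    problems.push(`undeclared dependencies: ${report.undeclaredDependencies.join(", ")}`);
  }
  if (!report.testsPassInIsolation) {
    problems.push("tests do not pass in isolation");
  }
  if (!report.publicApiTyped) {
    problems.push("public API is not typed");
  }
  if (!report.docsExplainWhy) {
    problems.push("documentation does not explain why the component exists");
  }
  return problems;
}
```

The point of a gate like this is that the requirements live outside the prompt: whatever generated the component, it is either accepted or sent back with a list of concrete reasons.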

That’s why the difference shows up on day two. The teams generating into structure can change things immediately. The teams generating into a blank folder are already accumulating debt.

Audit your own stuff

If you’re generating code with AI (and you should be), run these checks:

- Pick any AI-generated module. Can you explain where it starts and ends without reading the whole thing? Can you change one part without understanding all the other parts? If not, you don’t have boundaries.

- Can you draw the dependency graph of your last three AI-generated features from actual declarations in the code? Not from memory. If you can’t, the next developer definitely can’t.

- Take the main function of an AI-generated module. Without reading the implementation, tell me what it accepts, what it returns, how it fails. If you need to read the code to answer that, your contract is implicit. It’ll break.

- Give a module to a teammate who’s never seen it. Time how long it takes them to make a small, safe change. That number is your actual maintenance cost. It’s probably higher than you think.
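The dependency-graph check above can be approximated with a few lines of code. This is a deliberately naive sketch, assuming a regex scan over source text is good enough for a first pass: it only sees static `import` statements, misses `export ... from`, `require()`, and dynamic `import()`, and can be fooled by import-like text inside comments or strings. The function names and the in-memory `files` map are hypothetical; a real audit would read files from disk and use a proper parser.

```typescript
// Naive sketch: builds a dependency graph from static import declarations only.

type DependencyGraph = Record<string, string[]>;

// Collects the module specifiers of static `import` statements, in order of
// first appearance, without duplicates.
function listDeclaredImports(source: string): string[] {
  const importPattern = /import\s+(?:[^'"]*?\s+from\s+)?['"]([^'"]+)['"]/g;
  const found: string[] = [];
  let match: RegExpExecArray | null;
  while ((match = importPattern.exec(source)) !== null) {
    const specifier = match[1];
    if (found.indexOf(specifier) === -1) {
      found.push(specifier);
    }
  }
  return found;
}

// Maps each file name to the modules it declares a dependency on.
function buildDependencyGraph(files: Record<string, string>): DependencyGraph {
  const graph: DependencyGraph = {};
  for (const fileName of Object.keys(files)) {
    graph[fileName] = listDeclaredImports(files[fileName]);
  }
  return graph;
}
```

If the graph this produces does not match how the feature actually behaves at runtime, that gap is the implicit dependency problem described earlier.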

So what

The bottleneck was never generation speed. The bottleneck is whether what you generated can survive a team, a timeline, and requirements that keep changing.

Better prompts help. Better models help more. But the biggest lever is the structure your code lands in. Get that right and AI is the most powerful dev tool ever built. Get it wrong and you’re generating tomorrow’s rewrite at record speed.

We built Bit (open source) to be that structure. Hope AI builds on top of it. We ship production software every day, and code without structure doesn’t last the week.

If you’re building with AI and feeling the maintenance creep, reach us at team@bit.cloud if you want to talk.

AI-Generated Code Has a Shelf Life was originally published in Bits and Pieces on Medium, where people are continuing the conversation by highlighting and responding to this story.


Yonatan Sason | Sciencx (2026-02-23T12:13:37+00:00) AI-Generated Code Has a Shelf Life. Retrieved from https://www.scien.cx/2026/02/23/ai-generated-code-has-a-shelf-life/
