This content originally appeared on HackerNoon and was authored by Demographic

Table of Links

3 Preliminaries

3.1 Fair Supervised Learning and 3.2 Fairness Criteria

3.3 Dependence Measures for Fair Supervised Learning

4 Inductive Biases of DP-based Fair Supervised Learning

4.1 Extending the Theoretical Results to Randomized Prediction Rule

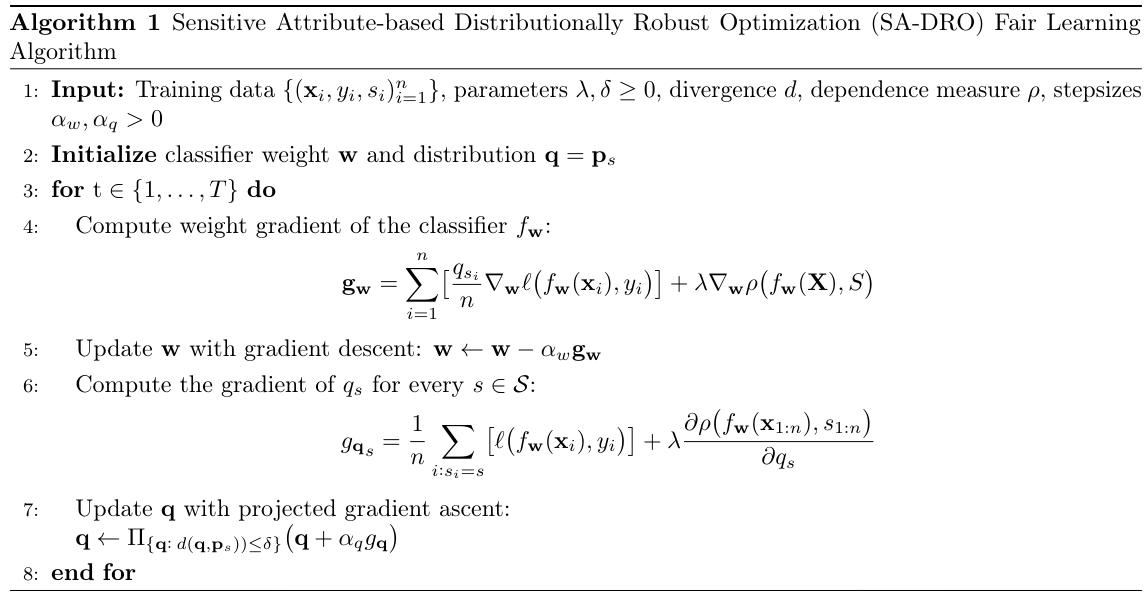

5 A Distributionally Robust Optimization Approach to DP-based Fair Learning

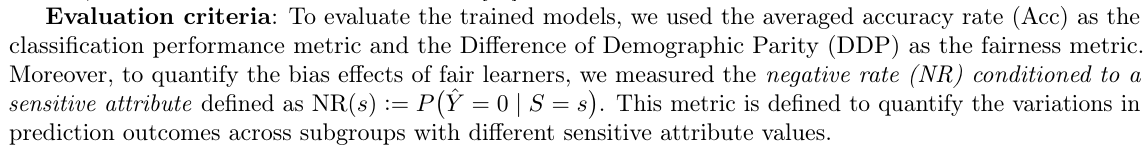

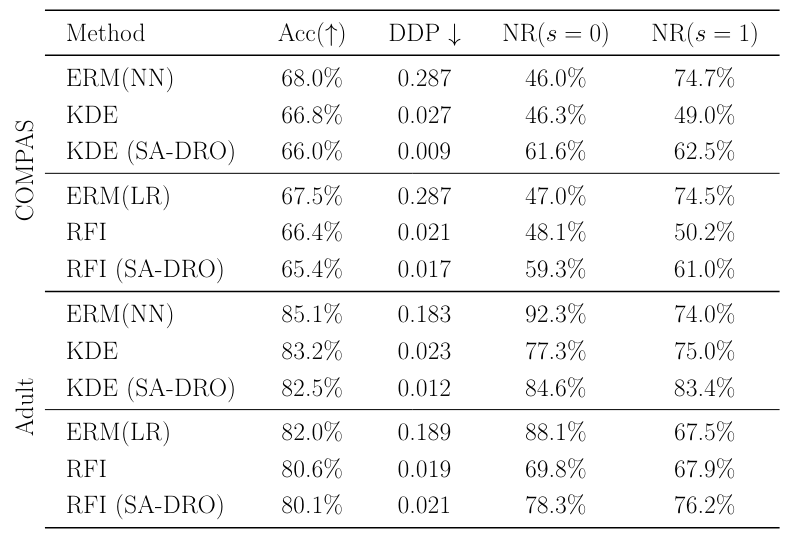

6 Numerical Results

6.2 Inductive Biases of Models trained in DP-based Fair Learning

6.3 DP-based Fair Classification in Heterogeneous Federated Learning

Appendix B Additional Results for Image Dataset

6.1 Experimental Setup

Datasets. In our experiments, we used the following standard datasets from the machine learning literature:

1. COMPAS dataset, with 12 features and a binary label indicating whether a subject recidivated within two years; the sensitive attribute is the binary race feature[1]. To simulate an imbalanced sensitive-attribute distribution, we used 2500 training and 750 test samples, in both of which 80% of the samples are from Z = 0 ("non-Caucasian") and 20% are from Z = 1 ("Caucasian").


2. Adult dataset, with 64 binary features and a binary label indicating whether a person's annual income exceeds $50K; here, gender is the sensitive attribute[2]. We used 15k training and 5k test samples, where, to simulate an imbalanced sensitive-attribute distribution, 80% of the samples are male and 20% are female.

3. CelebA dataset, proposed by [27], containing pictures of celebrities with 40 attribute annotations; we used "gender" as the binary label and the binary blond/non-blond hair attribute as the sensitive attribute. We used 5k training and 2k test samples. To simulate an imbalanced sensitive-attribute distribution, 80% of both the training and test samples are labeled blond and 20% are labeled non-blond.
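The text does not say how the imbalanced subsets were drawn. One plausible way to build such splits is to sample each sensitive group separately, as in the NumPy sketch below. The function name `imbalanced_split`, its parameters, and sampling without replacement from disjoint per-group pools are illustrative assumptions, not the authors' procedure. The `majority` argument selects which encoded value of Z forms the 80% group (Z = 0 for COMPAS; the 0/1 encoding of the Adult and CelebA attributes is also an assumption).

```python
import numpy as np

def imbalanced_split(z, n_train, n_test, majority=0, frac_majority=0.8, seed=0):
    """Hypothetical helper: return disjoint (train_idx, test_idx) in which a
    fraction `frac_majority` of each split has sensitive attribute z == majority.

    z: 1-D array of binary sensitive-attribute values (0/1).
    """
    rng = np.random.default_rng(seed)
    z = np.asarray(z)
    # Majority-group counts per split; the minority group gets the remainder
    # so that each split has exactly the requested size.
    n_tr_major = int(round(frac_majority * n_train))
    n_te_major = int(round(frac_majority * n_test))
    counts = {
        majority: (n_tr_major, n_te_major),
        1 - majority: (n_train - n_tr_major, n_test - n_te_major),
    }
    train_parts, test_parts = [], []
    for group, (n_tr, n_te) in counts.items():
        # Shuffle this group's indices, then carve out disjoint train/test slices.
        pool = rng.permutation(np.flatnonzero(z == group))
        if n_tr + n_te > pool.size:
            raise ValueError(f"group z={group} has only {pool.size} samples")
        train_parts.append(pool[:n_tr])
        test_parts.append(pool[n_tr:n_tr + n_te])
    # Mix the two groups so the splits are not ordered by sensitive attribute.
    train_idx = rng.permutation(np.concatenate(train_parts))
    test_idx = rng.permutation(np.concatenate(test_parts))
    return train_idx, test_idx
```

For COMPAS-sized splits, `imbalanced_split(z, 2500, 750, majority=0, frac_majority=0.8)` returns 2000 Z = 0 and 500 Z = 1 training indices, plus 600 Z = 0 and 150 Z = 1 test indices, provided each group has enough samples.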

DP-based Learning Methods: To learn binary classification models on the COMPAS and Adult datasets, we used the following DP-based fair classification methods: 1) the DDP-based KDE method [6] and FACL [12], 2) the mutual information-based fair classifier [11], and 3) the maximal correlation-based RFI classifier [13]. For the CelebA experiments, we used two DP-based fair classification methods: the KDE method [6] and the mutual information (MI) fair classifier [11].
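All of the methods above target demographic parity (DP), which asks that the rate of positive predictions not depend on the sensitive attribute. A common way to summarize how far a trained classifier is from DP is the empirical gap |P(Ŷ=1 | Z=1) - P(Ŷ=1 | Z=0)|, sketched below in NumPy. The function name `dp_violation` is hypothetical, and this hard-prediction version is the textbook definition rather than the exact metric or training penalty used by the KDE, FACL, MI, or RFI methods.

```python
import numpy as np

def dp_violation(y_pred, z):
    """Empirical demographic-parity gap |P(Yhat=1 | Z=1) - P(Yhat=1 | Z=0)|.

    y_pred: binary predictions (0/1); z: binary sensitive attribute (0/1).
    """
    y_pred = np.asarray(y_pred, dtype=float)
    z = np.asarray(z)
    if not (np.any(z == 0) and np.any(z == 1)):
        raise ValueError("both sensitive groups must be present")
    rate_z1 = y_pred[z == 1].mean()  # positive-prediction rate in group Z=1
    rate_z0 = y_pred[z == 0].mean()  # positive-prediction rate in group Z=0
    return abs(rate_z1 - rate_z0)
```

For example, `dp_violation([1, 1, 0, 0, 1, 0], [0, 0, 0, 1, 1, 1])` compares the group rates 2/3 and 1/3 and returns about 0.333. A value of 0 means both groups receive positive predictions at the same rate.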

In the experiments, we trained both a logistic regression classifier (a linear prediction model) and a neural network classifier.

The neural network architectures were: 1) for COMPAS, a multi-layer perceptron (MLP) with 4 hidden layers of 64 neurons each; 2) for Adult, an MLP with 4 hidden layers of 512 neurons each; and 3) for CelebA, the ResNet-18 [28] architecture, suited to the image inputs.
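The tabular models can be sketched as a plain NumPy forward pass. This is illustrative only: the text does not specify activations, initialization, or training details, so the ReLU hidden activations, He initialization, and sigmoid output below are assumptions, and the helper names `init_mlp` and `predict_proba` are hypothetical. Passing an empty `hidden` list yields the linear logistic-regression model; the ResNet-18 used for CelebA is not reproduced here. The input dimensions follow the feature counts stated for COMPAS (12) and Adult (64).

```python
import numpy as np

def init_mlp(d_in, hidden, seed=0):
    """Hypothetical helper: weights for an MLP with ReLU hidden layers and one
    output logit. hidden=[] gives logistic regression (a single linear layer)."""
    rng = np.random.default_rng(seed)
    sizes = [d_in, *hidden, 1]
    params = []
    for fan_in, fan_out in zip(sizes[:-1], sizes[1:]):
        # He (Kaiming) normal initialization, an assumed choice for ReLU layers.
        W = rng.normal(0.0, np.sqrt(2.0 / fan_in), size=(fan_in, fan_out))
        b = np.zeros(fan_out)
        params.append((W, b))
    return params

def predict_proba(params, X):
    """Forward pass: ReLU on hidden layers, sigmoid on the final logit."""
    h = np.asarray(X, dtype=float)
    for W, b in params[:-1]:
        h = np.maximum(h @ W + b, 0.0)
    W, b = params[-1]
    logits = (h @ W + b).ravel()
    # sigmoid(x) = 0.5 * (1 + tanh(x / 2)), which avoids overflow in exp.
    return 0.5 * (1.0 + np.tanh(0.5 * logits))

# Hidden-layer widths described above for the tabular datasets.
compas_mlp = init_mlp(d_in=12, hidden=[64] * 4)
adult_mlp = init_mlp(d_in=64, hidden=[512] * 4)
logreg = init_mlp(d_in=12, hidden=[])
```

Treating logistic regression as the zero-hidden-layer case keeps the linear and neural models on one code path, so the same fairness penalty could in principle be attached to either.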


:::info This paper is available on arXiv under the CC BY-NC-SA 4.0 DEED license.

:::

[1] https://github.com/propublica/compas-analysis

[2] https://archive.ics.uci.edu/dataset/2/adult

:::info Authors:

(1) Haoyu LEI, Department of Computer Science and Engineering, The Chinese University of Hong Kong (hylei22@cse.cuhk.edu.hk);

(2) Amin Gohari, Department of Information Engineering, The Chinese University of Hong Kong (agohari@ie.cuhk.edu.hk);

(3) Farzan Farnia, Department of Computer Science and Engineering, The Chinese University of Hong Kong (farnia@cse.cuhk.edu.hk).

:::



Demographic | Sciencx (2025-03-24T05:54:11+00:00) How to Test for AI Fairness. Retrieved from https://www.scien.cx/2025/03/24/how-to-test-for-ai-fairness/
